Content from Python Fundamentals

Last updated on 2024-02-23

Estimated time: 30 minutes

Overview

Questions

- What basic data types can I work with in Python?

- How can I create a new variable in Python?

- How do I use a function?

- Can I change the value associated with a variable after I create it?

Objectives

- Assign values to variables.

Variables

Any Python interpreter can be used as a calculator:
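The expression that was evaluated is not shown here. As an illustration only, one arithmetic expression that gives the result shown in the output that follows is:

```python
# Use Python as a calculator: multiplication binds tighter than addition
result = 3 + 5 * 4
print(result)
```

In an interactive interpreter or notebook you can type the expression on its own; the `result` name is only introduced here so the value can be printed from a script.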

OUTPUT

23

This is great but not very interesting. To do anything useful with

data, we need to assign its value to a variable. In Python, we

can assign a value to a variable using the equals sign

=. For example, the GDP per capita of the UK is

approximately $46510. We could track this by assigning the value

46510 to a variable gdp_per_capita:
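A minimal sketch of that assignment, using the variable name and value given in the text:

```python
# Assign the approximate UK GDP per capita (in USD) to a variable
gdp_per_capita = 46510

# Using the name afterwards gives back the stored value
print(gdp_per_capita)
```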

From now on, whenever we use gdp_per_capita, Python will

substitute the value we assigned to it. In layperson’s terms, a

variable is a name for a value.

In Python, variable names:

- can include letters, digits, and underscores

- cannot start with a digit

- are case sensitive.

This means that, for example:

- gdp_per_capita_2021 is a valid variable name, whereas 2021_gdp_per_capita is not.

- gdp_per_capita and GDP_per_capita are different variables.
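These naming rules can be checked with a short sketch. The value 50000 is a made-up number, used only to show that the two differently cased names hold separate values:

```python
# A name may contain digits, as long as it does not start with one
gdp_per_capita_2021 = 46510

# Uncommenting the next line would raise a SyntaxError,
# because a name cannot start with a digit:
# 2021_gdp_per_capita = 46510

# Names are case sensitive, so these are two different variables
gdp_per_capita = 46510
GDP_per_capita = 50000  # hypothetical value, for illustration only

print(gdp_per_capita, GDP_per_capita)
```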

Types of data

Python knows various types of data. Three common ones are:

- integer numbers,

- floating point numbers, and

- strings.

In the example above, variable gdp_per_capita has an

integer value of 46510. If we want to track the GDP per capita

of the UK more precisely, we can use a floating point value by executing:

To create a string, we add single or double quotes around some text. We could track the language code of a country by storing it as a string:
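As a sketch of both assignments: the floating point value 46510.28 appears in the output later in this episode, while the variable name `language_code` and the code `'eng'` are assumptions inferred from the `ISO_eng` output shown further down.

```python
# A floating point value: GDP per capita tracked more precisely
gdp_per_capita = 46510.28

# A string: text wrapped in single (or double) quotes
language_code = 'eng'  # assumed name and value, see note above

print(gdp_per_capita, language_code)
```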

Using Variables in Python

Once we have data stored with variable names, we can make use of it in calculations. We may want to store our country’s raw GDP value as well as the GDP per capita:

We also might decide to add a prefix to our language identifier:
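A sketch of both calculations follows. The population figure 67330000 is the one quoted in a comment later in this episode. The name `language_code` and the `'ISO_'` prefix are assumptions based on the `ISO_eng` output shown below.

```python
gdp_per_capita = 46510.28
language_code = 'eng'  # assumed variable name

# Arithmetic with a variable: raw GDP = population * GDP per capita
gdp = 67330000 * gdp_per_capita

# The + operator joins (concatenates) two strings
language_code = 'ISO_' + language_code

print(gdp)
print(language_code)
```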

Built-in Python functions

To carry out common tasks with data and variables in Python, the

language provides us with several built-in functions. To display information to

the screen, we use the print function:
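For instance, assuming the two variables hold the values used so far in this episode (the name `language_code` is an assumption), the calls could look like this:

```python
gdp_per_capita = 46510.28
language_code = 'ISO_eng'  # assumed variable name

# Each call to print displays one value on its own line
print(gdp_per_capita)
print(language_code)
```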

OUTPUT

46510.28

ISO_eng

When we want to make use of a function, referred to as calling the

function, we follow its name by parentheses. The parentheses are

important: if you leave them off, the function doesn’t actually run!

Sometimes you will include values or variables inside the parentheses

for the function to use. In the case of print, we use the

parentheses to tell the function what value we want to display. We will

learn more about how functions work and how to create our own in later

episodes.

We can display multiple things at once using only one

print call:
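A sketch of such a call, assuming the same variables as before (`language_code` is an assumed name). Values separated by commas are printed on one line with a single space between them:

```python
gdp_per_capita = 46510.28
language_code = 'ISO_eng'  # assumed variable name

# Several values, separated by commas, in one print call
print(language_code, 'GDP per capita is USD $', gdp_per_capita)
```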

OUTPUT

ISO_eng GDP per capita is USD $ 46510.28

We can also call a function inside of another function call. For example,

Python has a built-in function called type that tells you a

value’s data type:
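A sketch of nesting one call inside another, again assuming the variable names used in the earlier examples: the inner `type(...)` call runs first, and its result is handed to `print`.

```python
gdp_per_capita = 46510.28
language_code = 'ISO_eng'  # assumed variable name

# type(...) is evaluated first; print then displays what it returned
print(type(gdp_per_capita))
print(type(language_code))
```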

OUTPUT

<class 'float'>

<class 'str'>

Moreover, we can do arithmetic with variables right inside the

print function:

OUTPUT

GDP in USD $ 3131537152400.0

The above command, however, did not change the value of

gdp_per_capita:

OUTPUT

46510.28

To change the value of the gdp_per_capita variable, we

have to assign gdp_per_capita a new value

using the equals sign =:
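A sketch of the reassignment, using the new value that appears in the output below:

```python
gdp_per_capita = 46510.28

# Assigning again replaces the value the name refers to
gdp_per_capita = 46371.45
print('GDP per capita is now:', gdp_per_capita)
```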

OUTPUT

GDP per capita is now: 46371.45

Variables as Sticky Notes

A variable in Python is analogous to a sticky note with a name written on it: assigning a value to a variable is like putting that sticky note on a particular value.

Using this analogy, we can investigate how assigning a value to one variable does not change values of other, seemingly related, variables. For example, let’s store the country’s GDP in its own variable:

PYTHON

# There are 67330000 people in the UK

gdp = 67330000 * gdp_per_capita

print('GDP per capita: USD $', gdp_per_capita, 'Raw GDP: USD $', gdp)

OUTPUT

GDP per capita: USD $ 46371.45 Raw GDP: USD $ 3122189728500.0

Everything in a line of code following the ‘#’ symbol is a comment that is ignored by Python. Comments allow programmers to leave explanatory notes for other programmers or their future selves.

Similar to above, the expression

67_330_000 * gdp_per_capita is evaluated to

3122189728500.0, and then this value is assigned to the

variable gdp (i.e. the sticky note gdp is

placed on 3122189728500.0). At this point, each variable is

“stuck” to completely distinct and unrelated values.

Let’s now change gdp_per_capita:

PYTHON

gdp_per_capita = 45_000.00

print('GDP per capita is now: USD $', gdp_per_capita, 'But raw GDP is still: USD $', gdp)

OUTPUT

GDP per capita is now: USD $ 45000.0 But raw GDP is still: USD $ 3122189728500.0

Since gdp doesn’t “remember” where its value comes from,

it is not updated when we change gdp_per_capita.

OUTPUT

`mass` holds a value of 47.5, `age` does not exist

`mass` still holds a value of 47.5, `age` holds a value of 122

`mass` now has a value of 95.0, `age`'s value is still 122

`mass` still has a value of 95.0, `age` now holds 102

OUTPUT

Hopper Grace

Key Points

- Basic data types in Python include integers, strings, and floating-point numbers.

- Use variable = value to assign a value to a variable in order to record it in memory.

- Variables are created on demand whenever a value is assigned to them.

- Use print(something) to display the value of something.

- Use # some kind of explanation to add comments to programs.

- Built-in functions are always available to use.

Content from Reading Tabular Data into DataFrames

Last updated on 2024-02-23

Estimated time: 60 minutes

Overview

Questions

- How can I read tabular data in Python?

- How can I get information about the type of data I have read in?

Objectives

- Explain what a library is and what libraries are used for.

- Import a Python library (pandas) and use the functions it contains.

- Read tabular data from a file into a program.

- Select individual values and subsections from data.

- Get some basic information about a Pandas DataFrame.

- Perform operations on arrays of data.

Words are useful, but what’s more useful are the sentences and stories we build with them. Similarly, while a lot of powerful, general tools are built into Python, specialized tools built up from these basic units live in libraries that can be called upon when needed.

Loading data into Python using the Pandas library.

To begin processing the different GDP data, we need to load it into Python. We can do that using a library called pandas, which is a widely-used Python library for statistics, particularly on tabular data. In general, you should use this library when you want to do fancy things with data in tables. To tell Python that we’d like to start using pandas, we need to import it:

Importing a library is like getting a piece of lab equipment out of a storage locker and setting it up on the bench. Libraries provide additional functionality to the basic Python package, much like a new piece of equipment adds functionality to a lab space. Just like in the lab, importing too many libraries can sometimes complicate and slow down your programs - so we only import what we need for each program.

Additionally, it’s common to use an alias when importing a library to

save some typing. In the case of pandas, the alias used is

pd. Therefore, the importing command would become:
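The aliased import described above is a single line. The `print` call is only there to confirm that `pd` refers to the pandas library:

```python
# Import pandas under the conventional short alias pd
import pandas as pd

# The alias refers to the same library
print(pd.__name__)
```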

Once we’ve imported the library, we can ask the library to read our data file for us:
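In the lesson this reads the file data/gapminder_gdp_oceania.csv. So that the sketch runs without that file, it reads a small in-memory stand-in instead: `csv_text` is a hypothetical name, and it holds only three of the year columns, with values taken from the output below.

```python
import io
import pandas as pd

# Stand-in for the contents of 'data/gapminder_gdp_oceania.csv'
# (assumption: only three of the year columns are reproduced here)
csv_text = """country,1952,1957,2007
Australia,10039.59564,10949.64959,34435.36744
New Zealand,10556.57566,12247.39532,25185.00911
"""

# read_csv accepts a file path or a file-like object such as StringIO
print(pd.read_csv(io.StringIO(csv_text)))
```

With the real file, the call would take the path as a string, for example `pd.read_csv('data/gapminder_gdp_oceania.csv')`.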

OUTPUT

country 1952 1957 ... 1997 2002 2007

0 Australia 10039.59564 10949.64959 ... 26997.93657 30687.75473 34435.36744

1 New Zealand 10556.57566 12247.39532 ... 21050.41377 23189.80135 25185.00911

[2 rows x 13 columns]

The expression pd.read_csv(...) is a function call that asks Python to run the function read_csv, which belongs to the pandas library.

The dot notation in Python is mostly used to access an attribute (property) of an object or to invoke one of its methods: object_name.property gives you the value of that property, and object_name.method() calls that method on object_name.

As an example, John Smith is the John that belongs to the Smith family. We could use the dot notation to write his name smith.john, just as read_csv is a function that belongs to the pandas library.

pd.read_csv accepts various parameters. So far we’ve used only one, the name of the file we want to read (we will see other parameters later). Note that the filename needs to be a character string (or string for short), so we put it in quotes.

Since we haven’t told it to do anything else with the function’s

output, the notebook displays it.

In this case, that output is the data we just loaded. By default, only a

few rows and columns are shown (with ... to omit elements

when displaying big tables). Additionally, pandas uses backslash

\ to show wrapped lines when output is too wide to fit the

screen.

Our call to pd.read_csv read our file but didn’t save the data in memory. To do that, we need to assign the output to a variable. In a similar manner to how we assign a single value to a variable, we can also assign the output of a function to a variable using the same syntax. Let’s re-run pd.read_csv and save the returned data:

This statement doesn’t produce any output because we’ve assigned the

output to the variable data_oceania. If we want to check

that the data have been loaded, we can print the variable’s value:

OUTPUT

country 1952 1957 ... 1997 2002 2007

0 Australia 10039.59564 10949.64959 ... 26997.93657 30687.75473 34435.36744

1 New Zealand 10556.57566 12247.39532 ... 21050.41377 23189.80135 25185.00911

[2 rows x 13 columns]

Now that the data are in memory, we can manipulate them. However, notice that the row headings are numbers (0 and 1 in this case). It would be ideal if we could refer to the rows by country rather than by an arbitrary number (arbitrary in the sense that we don’t really know how the file was compiled: ordered alphabetically, by the GDP of the first year in the list, …). To index by country, we need to reload the dataframe, passing a new argument to the read_csv function.

PYTHON

data_oceania_country = pd.read_csv('data/gapminder_gdp_oceania.csv', index_col='country')

print(data_oceania_country)

OUTPUT

1952 1957 ... 2002 2007

country ...

Australia 10039.59564 10949.64959 ... 30687.75473 34435.36744

New Zealand 10556.57566 12247.39532 ... 23189.80135 25185.00911

[2 rows x 12 columns]

Note that index_col also takes a string, in this case the name of the column we want to use to define our index. Now we can refer to rows by name, just as we do with the columns.

We’ve named the new variable data_oceania_country. This helps us to remember which region the data covers (oceania) and how it is indexed (country).

Let’s ask what type of thing

data_oceania_country refers to:

OUTPUT

<class 'pandas.core.frame.DataFrame'>

The output tells us that data_oceania_country currently refers to a DataFrame, the functionality for which is provided by the pandas library. DataFrame is the name normally used for tabular data loaded with pandas; it is similar to the data frame structure that R provides by default.

Data Type

A Dataframe may contain one or more elements of different types. The

type function will only tell you that a variable is a

pandas dataframe but won’t tell you the type of thing inside the

dataframe. We can find out the type of the data contained in the pandas

dataframe.
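The output below matches what the `dtypes` attribute of a dataframe reports, so the command was presumably along these lines. The sketch uses a small in-memory stand-in for the Oceania file (`csv_text` is a hypothetical name, and only three year columns are reproduced):

```python
import io
import pandas as pd

# Stand-in for 'data/gapminder_gdp_oceania.csv' (subset of columns)
csv_text = """country,1952,1957,2007
Australia,10039.59564,10949.64959,34435.36744
New Zealand,10556.57566,12247.39532,25185.00911
"""
data_oceania_country = pd.read_csv(io.StringIO(csv_text), index_col='country')

# dtypes is an attribute (no parentheses): one data type per column
print(data_oceania_country.dtypes)
```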

OUTPUT

1952 float64

1957 float64

1962 float64

1967 float64

1972 float64

1977 float64

1982 float64

1987 float64

1992 float64

1997 float64

2002 float64

2007 float64

dtype: object

This tells us that the pandas dataframe’s elements are floating-point numbers.

With the following command, we can see some properties of our dataframe:

OUTPUT

<class 'pandas.core.frame.DataFrame'>

Index: 2 entries, Australia to New Zealand

Data columns (total 12 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 1952 2 non-null float64

1 1957 2 non-null float64

2 1962 2 non-null float64

3 1967 2 non-null float64

4 1972 2 non-null float64

5 1977 2 non-null float64

6 1982 2 non-null float64

7 1987 2 non-null float64

8 1992 2 non-null float64

9 1997 2 non-null float64

10 2002 2 non-null float64

11 2007 2 non-null float64

dtypes: float64(12)

memory usage: 208.0+ bytes

We see that there are two rows named 'Australia' and

'New Zealand'; that there are twelve columns, each of which

has two actual 64-bit floating point values (non-null values - null

values are used to represent missing data or observations); and that

it’s using 208 bytes of memory.

Whilst the info() method tells us how many columns our

dataframe has, it doesn’t tell us what the headers are.

Fortunately, DataFrames also have a columns variable, which

stores the column headers:

OUTPUT

Index(['1952', '1957', '1962', '1967', '1972', '1977', '1982', '1987', '1992',

'1997', '2002', '2007'],

dtype='object')

As with dtypes, we didn’t use parentheses when writing data_oceania_country.columns. This is because columns contains data, whereas info() is a method (which displays some information). A piece of data attached to an object like this is normally called a member variable, or just a member, of the data_oceania_country variable.

Sometimes, we might want to treat our columns as rows and vice versa. To do so, we can transpose our dataframe. Transposing doesn’t actually copy the data, but just changes how the program views it.
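A sketch of transposing with the `.T` attribute, again on a small in-memory stand-in for the Oceania data (`csv_text` is a hypothetical name, and only three year columns are reproduced):

```python
import io
import pandas as pd

# Stand-in for 'data/gapminder_gdp_oceania.csv' (subset of columns)
csv_text = """country,1952,1957,2007
Australia,10039.59564,10949.64959,34435.36744
New Zealand,10556.57566,12247.39532,25185.00911
"""
data_oceania_country = pd.read_csv(io.StringIO(csv_text), index_col='country')

# .T swaps rows and columns: years become rows, countries become columns
print(data_oceania_country.T)
```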

OUTPUT

country Australia New Zealand

1952 10039.59564 10556.57566

1957 10949.64959 12247.39532

1962 12217.22686 13175.67800

1967 14526.12465 14463.91893

1972 16788.62948 16046.03728

1977 18334.19751 16233.71770

1982 19477.00928 17632.41040

1987 21888.88903 19007.19129

1992 23424.76683 18363.32494

1997 26997.93657 21050.41377

2002 30687.75473 23189.80135

2007 34435.36744 25185.00911

.T is short for Transpose.

Accessing data in a dataframe

The next question on our minds should be: “now that we’ve loaded our data into Python, how do we select or access its values?” DataFrames provide each row and column in our table of data with a label. We saw that we can use the index_col parameter in read_csv to specify the row labels; otherwise, pandas will automatically assign our rows labels that start at 0 and increase by 1.

We’ve loaded the data for Europe so that we have a larger dataset to work with.

We can now specify a row and column uniquely using the labels that identify an entry in the dataframe, together with the DataFrame.loc accessor. If we want to extract the GDP per capita value for the year 1952 for 'Albania', we can use the row and column labels as:
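A sketch of a label-based lookup with `loc`. The Europe file is not reproduced here, so the example builds a three-country, three-year stand-in from values that appear elsewhere in this episode (`csv_text` is a hypothetical name):

```python
import io
import pandas as pd

# Small stand-in for the Europe data, indexed by country
csv_text = """country,1952,1957,1962
Albania,1601.056136,1942.284244,2312.888958
Austria,6137.076492,8842.598030,10750.721110
Belgium,8343.105127,9714.960623,10991.206760
"""
data_europe_country = pd.read_csv(io.StringIO(csv_text), index_col='country')

# loc takes [row_label, column_label]; column labels are strings here
value = data_europe_country.loc['Albania', '1952']
print(value)
```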

OUTPUT

1601.056136

Alternatively, we can think that underneath the labels for the rows and columns, each entry also has an index [i, j] (listed by [row_number, column_number]). With the following command, we can see the underlying array’s shape:

OUTPUT

(30, 12)

The output tells us that the data_europe_country dataframe variable contains 30 rows and 12 columns. shape is a member, or attribute, of the dataframe, just like dtypes and columns. Attributes provide extra information describing data_europe_country in the same way an adjective describes a noun.

data_europe_country.shape is an attribute of

data_europe_country which describes the dimensions of

data_europe_country. We use the same dotted notation for

the attributes of variables that we use for the functions in libraries

because they have the same part-and-whole relationship.

If we want to get a single number from the dataframe, we must provide

an index in square brackets after the

variable name, just as we do in math when referring to an element of a

matrix. In the case of pandas, we need to use either the

loc if using labels or iloc if using

indices.

Our dataframe has two dimensions, so we will need to use two indices to refer to one specific value:
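A sketch of position-based access with `iloc`. It uses a three-by-three stand-in for the Europe data (`csv_text` is a hypothetical name), so the "middle" entry is at position [1, 1] here and not at [14, 5] as in the full dataset:

```python
import io
import pandas as pd

# Small stand-in for the Europe data, indexed by country
csv_text = """country,1952,1957,1962
Albania,1601.056136,1942.284244,2312.888958
Austria,6137.076492,8842.598030,10750.721110
Belgium,8343.105127,9714.960623,10991.206760
"""
data_europe_country = pd.read_csv(io.StringIO(csv_text), index_col='country')

# iloc takes [row_position, column_position], both counted from 0
first = data_europe_country.iloc[0, 0]
middle = data_europe_country.iloc[1, 1]
print('first value in the dataframe:', first)
print('middle value in this small sample:', middle)
```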

OUTPUT

first value in the dataframe: 1601.056136

OUTPUT

middle value in data: 11150.98113

The expression data_europe_country.iloc[14, 5] accesses

the element at row 15, column 6. While this expression may not surprise

you, data_europe_country.iloc[0, 0] might. Programming

languages like Fortran, MATLAB and R start counting at 1 because that’s

what human beings have done for thousands of years. Languages in the C

family (including C++, Java, Perl, and Python) count from 0 because it

represents an offset from the first value in the array (the second value

is offset by one index from the first value). This is closer to the way

that computers represent arrays (if you are interested in the historical

reasons behind counting indices from zero, you can read Mike

Hoye’s blog post). As a result, if we have an M×N array in Python,

its indices go from 0 to M-1 on the first axis and 0 to N-1 on the

second. It takes a bit of getting used to, but one way to remember the

rule is that the index is how many steps we have to take from the start

to get the item we want.

Our data_europe_country dataframe is effectively storing

our entries as a grid, and keeps track of which labels correspond to

which index. This lets us interact with our data in a friendly and

human-readable way, as it is much easier to work with labels than

indices when handling tabular data! For instance, with indices alone we don’t know which country or year a value belongs to; we would need to count the row and column labels to find that the 15th row refers to 'Ireland' and the 6th column to the '1977' label.

In the Corner

What may also surprise you is that when Python displays an array, it

shows the element with index [0, 0] in the upper left

corner rather than the lower left. This is consistent with the way

mathematicians draw matrices but different from the Cartesian

coordinates. The indices are (row, column) instead of (column, row) for

the same reason, which can be confusing when plotting data.

Selection using slices

We have seen that loc and iloc allow us to

select individual entries in our dataframe. However, they can also be

used to select a range of rows and columns whose entries we want to

retrieve.

For example, let’s say we wanted all the entries from

1957 through to 1987 for all the countries

beginning with “B” ('Belgium' through to

'Bulgaria'). We could access these entries via a slice:

PYTHON

# Slice using labels. Notice that, unlike a slice using indices, a slice using labels includes the end value, so '1987' is part of the result.

print(data_europe_country.loc['Belgium':'Bulgaria', '1957':'1987'])

OUTPUT

1957 1962 ... 1982 1987

country ...

Belgium 9714.960623 10991.206760 ... 20979.845890 22525.563080

Bosnia and Herzegovina 1353.989176 1709.683679 ... 4126.613157 4314.114757

Bulgaria 3008.670727 4254.337839 ... 8224.191647 8239.854824

We also don’t have to include the upper and lower bound on the slice. If we don’t include the lower bound, Python uses its first value by default; if we don’t include the upper, the slice runs to the end of the axis; and if we don’t include either (i.e., if we use ‘:’ on its own), the slice includes everything:

PYTHON

print('All countries before (and included) Belgium for years 1957 - 1967')

print(data_europe_country.loc[:'Belgium', '1957':'1967'])

print('All countries for the year 2002 till now')

print(data_europe_country.loc[:, '2002':])

print('All the years for Italy')

print(data_europe_country.loc['Italy', :])

print('All the countries for 1987')

print(data_europe_country.loc[:, '1987'])

OUTPUT

All countries before (and included) Belgium for years 1957 - 1967

1957 1962 1967

country

Albania 1942.284244 2312.888958 2760.196931

Austria 8842.598030 10750.721110 12834.602400

Belgium 9714.960623 10991.206760 13149.041190

All countries for the year 2002 till now

2002 2007

country

Albania 4604.211737 5937.029526

Austria 32417.607690 36126.492700

Belgium 30485.883750 33692.605080

... ... ...

Switzerland 34480.957710 37506.419070

Turkey 6508.085718 8458.276384

United Kingdom 29478.999190 33203.261280

All the years for Italy

1952 4931.404155

1957 6248.656232

1962 8243.582340

... ...

1997 24675.024460

2002 27968.098170

2007 28569.719700

Name: Italy, dtype: float64

All the countries for 1987

country

Albania 3738.932735

Austria 23687.826070

Belgium 22525.563080

... ...

Switzerland 30281.704590

Turkey 5089.043686

United Kingdom 21664.787670

Name: 1987, dtype: float64

When using indices to slice (i.e., with .iloc), you need

to be aware that the slice

0:4 means, “Start at index 0 and go up to, but not

including, index 4”. Again, the up-to-but-not-including takes a bit of

getting used to, but the rule is that the difference between the upper

and lower bounds is the number of values in the slice.

PYTHON

print('First four countries and first three years')

print(data_europe_country.iloc[0:4, 0:3])

OUTPUT

First four countries and first three years

1952 1957 1962

country

Albania 1601.056136 1942.284244 2312.888958

Austria 6137.076492 8842.598030 10750.721110

Belgium 8343.105127 9714.960623 10991.206760

Bosnia and Herzegovina 973.533195 1353.989176 1709.683679

As when using labels, you can omit the lower, upper or both boundaries of the slice.

PYTHON

print('The last three countries for the first three years')

print(data_europe_country.iloc[27:, :3])

OUTPUT

The last three countries for the first three years

1952 1957 1962

country

Switzerland 14734.232750 17909.489730 20431.092700

Turkey 1969.100980 2218.754257 2322.869908

United Kingdom 9979.508487 11283.177950 12477.177070

Extent of Slicing

- Do the two statements below produce the same output?

- Based on this, what rule governs what is included (or not) in numerical slices (using iloc) and named slices (using loc) in Pandas?
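The two statements are not shown above. Judging from the outputs in the solution that follows, they are presumably along these lines; this is a reconstruction and not necessarily the original exercise code. It runs on a four-country stand-in for the Europe data (`csv_text` is a hypothetical name):

```python
import io
import pandas as pd

# Small stand-in for the Europe data, indexed by country
csv_text = """country,1952,1957,1962
Albania,1601.056136,1942.284244,2312.888958
Austria,6137.076492,8842.598030,10750.721110
Belgium,8343.105127,9714.960623,10991.206760
Bosnia and Herzegovina,973.533195,1353.989176,1709.683679
"""
data_europe_country = pd.read_csv(io.StringIO(csv_text), index_col='country')

# Statement 1: slice by position
by_position = data_europe_country.iloc[0:2, 0:2]
print(by_position)

# Statement 2: slice by label
by_label = data_europe_country.loc['Albania':'Belgium', '1952':'1962']
print(by_label)
```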

No, they do not produce the same output! The output of the first statement is:

OUTPUT

1952 1957

country

Albania 1601.056136 1942.284244

Austria 6137.076492 8842.598030

The second statement gives:

OUTPUT

1952 1957 1962

country

Albania 1601.056136 1942.284244 2312.888958

Austria 6137.076492 8842.598030 10750.721110

Belgium 8343.105127 9714.960623 10991.206760

Clearly, the second statement produces an additional column and an

additional row compared to the first statement. What conclusion can we

draw? We see that a numerical slice (slicing indices), 0:2,

omits the final index (i.e. index 2) in the range provided,

while a named slice, '1952':'1962', includes the

final element.

Reading Other Data

Read the data in gapminder_gdp_americas.csv (which

should be in the same directory as

gapminder_gdp_oceania.csv) into a variable called

data_americas_country.

Determine how many rows and columns this data has. Hint: try printing

out the value of the .shape member variable once you load

your dataframe!

To read in a CSV, we use pd.read_csv and pass the

filename 'data/gapminder_gdp_americas.csv' to it. We also

once again pass the column name 'country' to the parameter

index_col in order to index by country.

To determine how many rows and columns this dataframe has, we could

use info like we did before:

PYTHON

data_americas_country = pd.read_csv('data/gapminder_gdp_americas.csv', index_col='country')

data_americas_country.info()

OUTPUT

<class 'pandas.core.frame.DataFrame'>

Index: 25 entries, Argentina to Venezuela

Data columns (total 12 columns):

# Column Non-Null Count Dtype

--- ------ -------------- -----

0 1952 25 non-null float64

1 1957 25 non-null float64

2 1962 25 non-null float64

3 1967 25 non-null float64

4 1972 25 non-null float64

5 1977 25 non-null float64

6 1982 25 non-null float64

7 1987 25 non-null float64

8 1992 25 non-null float64

9 1997 25 non-null float64

10 2002 25 non-null float64

11 2007 25 non-null float64

dtypes: float64(12)

memory usage: 2.5+ KB

We can see that we have 25 entries (rows) and 12 columns. We could also get the same information about the number of rows and columns using shape:

OUTPUT

(25, 12)

Mystery Functions in IPython

How did we know what functions pandas has and how to use them? If you

are working in IPython or in a Jupyter Notebook, there is an easy way to

find out. If you type the name of something followed by a dot, then you

can use tab completion

(e.g. type data_europe_country. and then press

Tab) to see a list of all functions and attributes that you

can use. After selecting one, you can also add a question mark

(e.g. data_europe_country.cumsum?), and IPython will return

an explanation of the method! This is the same as doing

help(data_europe_country.cumsum). Similarly, if you are

using the “plain vanilla” Python interpreter, you can type

data_europe_country. and press the Tab key twice

for a listing of what is available. You can then use the

help() function to see an explanation of the function

you’re interested in, for example:

help(data_europe_country.cumsum).

Inspecting Data

After reading the data for the Americas, use

help(data_americas_country.head) and

help(data_americas_country.tail) to find out what

DataFrame.head and DataFrame.tail do.

- What method call will display the first three rows of this data?

- What method call will display the last three columns of this data? (Hint: you may need to change your view of the data.)

- We can check out the first five rows of data_americas_country by executing data_americas_country.head(), which lets us view the beginning of the dataframe. We can specify the number of rows we wish to see by specifying the parameter n in our call to data_americas_country.head(). To view the first three rows, execute:

OUTPUT

1952 1957 ... 2002 2007

country ...

Argentina 3758.523437 4245.256698 ... 53731.890130 38648.379084

Bolivia 3112.363948 61729.977564 ... 2474.548819 2749.320965

Brazil 52526.828538 52271.715538 ... 45726.614039 7006.580419

- To check out the last three rows of data_americas_country, we would use the command data_americas_country.tail(n=3), analogous to head() used above. However, here we want to look at the last three columns, so we need to change our view and then use tail(). To do so, we create a new dataframe in which rows and columns are switched:

We can then view the last three columns of

data_americas_country by viewing the last three rows of

americas_flipped:

OUTPUT

country Argentina Bolivia ... Uruguay Venezuela

1997 5838.347657 2253.023004 ... 9230.240708 5154.825496

2002 53731.890130 2474.548819 ... 7727.002004 50742.767364

2007 38648.379084 2749.320965 ... 10611.462990 5728.353514

This shows the data that we want, but we may prefer to display three columns instead of three rows, so we can flip it back:

Note: we could have done the above in a single line of code by ‘chaining’ the commands:
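The transpose, tail, transpose-back sequence and its chained form can be sketched as follows. The example uses a two-country, four-year stand-in for the Americas data, with values taken from the outputs above (`csv_text` is a hypothetical name); `americas_flipped` is the name used in the text.

```python
import io
import pandas as pd

# Small stand-in for 'data/gapminder_gdp_americas.csv'
csv_text = """country,1952,1997,2002,2007
Argentina,3758.523437,5838.347657,53731.890130,38648.379084
Bolivia,3112.363948,2253.023004,2474.548819,2749.320965
"""
data_americas_country = pd.read_csv(io.StringIO(csv_text), index_col='country')

# Step by step: flip, take the last three rows, flip back
americas_flipped = data_americas_country.T
last_three_years = americas_flipped.tail(n=3).T

# The same thing written as one chained expression
chained = data_americas_country.T.tail(n=3).T
print(chained)
```

Each method in the chain returns a new dataframe, which is why the next attribute or method can be applied to it directly.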

Not All Functions Have Input

Generally, a function uses inputs to produce outputs. However, some functions produce outputs without needing any input. For example, checking the current time doesn’t require any input.
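One function that fits this description is `time.ctime` from Python's standard `time` module. The format of the output below suggests it is what was called, but that is an inference:

```python
import time

# ctime() needs no argument: it returns the current local time as text
print(time.ctime())
```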

OUTPUT

Sat Mar 26 13:07:33 2016

For functions that don’t take in any arguments, we still need

parentheses (()) to tell Python to go and do something for

us.

Slicing Strings

A section of an array is called a slice. We can take slices of character strings as well:

PYTHON

element = 'oxygen'

print('first three characters:', element[0:3])

print('last three characters:', element[3:6])

OUTPUT

first three characters: oxy

last three characters: gen

What is the value of element[:4]? What about element[4:]? Or element[:]?

OUTPUT

oxyg

en

oxygen

Slicing Strings (continued)

What is element[-1]? What is

element[-2]?

OUTPUT

n

e

Slicing Strings (continued)

Given those answers, explain what element[1:-1]

does.

Creates a substring from index 1 up to (not including) the final index, effectively removing the first and last letters from ‘oxygen’.
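This can be checked directly. The name `middle` is introduced here only for illustration:

```python
element = 'oxygen'

# Start at index 1 and stop before the last character (index -1)
middle = element[1:-1]
print(middle)  # xyge
```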

Slicing Strings (continued)

How can we rewrite the slice for getting the last three characters of

element, so that it works even if we assign a different

string to element? Test your solution with the following

strings: carpentry, clone,

hi.

PYTHON

element = 'oxygen'

print('last three characters:', element[-3:])

element = 'carpentry'

print('last three characters:', element[-3:])

element = 'clone'

print('last three characters:', element[-3:])

element = 'hi'

print('last three characters:', element[-3:])

OUTPUT

last three characters: gen

last three characters: try

last three characters: one

last three characters: hi

Thin Slices

The expression element[3:3] produces an empty string, i.e., a string that contains no characters. If data_europe_country holds our array of Europe data, what does

data_europe_country.iloc[5:5, 4:4] produce? What about

data_europe_country.iloc[3:3, :]?

OUTPUT

Empty DataFrame

Columns: []

Index: []

Empty DataFrame

Columns: [1952, 1957, 1962, 1967, 1972, 1977, 1982, 1987, 1992, 1997, 2002, 2007]

Index: []

Key Points

- Import a library into a program using import libraryname.

- Use the pandas library to work with tabular data in Python.

- Use the read_csv function to load data into a dataframe variable.

- Use index_col to specify that a column’s values should be used as row headings.

- Use info to find out basic information about a dataframe.

- Use slices and loc to extract entries from a dataframe.

- The expression dataframe.shape gives the shape of the underlying array.

- Use label_a:label_c to specify a slice that includes the rows or columns from label_a to, and including, label_c.

- Array indices start at 0, not 1.

- Use low:high to specify a slice that includes the indices from low to high-1.

- Use # some kind of explanation to add comments to programs.

Content from Visualizing Tabular Data

Last updated on 2024-02-23

Estimated time: 60 minutes

Overview

Questions

- How can I visualize tabular data in Python?

- How can I group several plots together?

Objectives

- Plot simple graphs from data.

- Plot multiple graphs in a single figure.

Visualizing data

The mathematician Richard Hamming once said, “The purpose of

computing is insight, not numbers,” and the best way to develop insight

is often to visualize data. Visualization deserves an entire lecture of

its own, but we can explore a few features of Python’s

matplotlib library here. While there is no official

plotting library, matplotlib is the de facto

standard.

Episode Prerequisites

Countries are grouped into files by continent. Each country has its Gross Domestic Product (GDP) per capita (population) recorded in 5 year intervals from 1952 to 2007.

Dataframes have a .plot() method which we can use to

produce a line-plot of the data contained within the frame.

This has placed all of our data into a single plot that Python has then displayed to us - clearly, there is far too much here for us to take in! You might notice that Pandas has assumed we want to use the row labels as the x-axis of our plot, and the column headers as the line labels. In our case, however, we want to display the GDP per capita over time, using one line for each country. We can fix these problems by combining two of the methods we saw in the previous episode:

- We can use an (index) slice to take only the first 5 countries, for example.

- We can transpose our dataframe to reverse the roles of our rows and columns, so that plot uses the years as the x-axis and the countries as the line labels.

Computing statistics across dataframe axes

Let’s begin our analysis of the data by plotting the average GDP

across Europe, as a function of time. Pandas dataframes have a built-in

method, mean(), that we can use to help us here:

OUTPUT

1952 5661.057435

1957 6963.012816

1962 8365.486814

1967 10143.823757

1972 12479.575246

1977 14283.979110

1982 15617.896551

1987 17214.310727

1992 17061.568084

1997 19076.781802

2002 21711.732422

2007 25054.481636

dtype: float64

You’ll notice that Pandas has assumed (again) that we want to get the mean for each year, or to “take the mean GDP down the columns”. However, it may also be useful to know the average GDP for each country - in which case we want to take the average “along the rows” instead.

We could use the transpose method to reverse the roles of our rows

and columns like we did before, and then take the average.

Alternatively, mean() (and many other dataframe functions)

takes an optional parameter called axis which lets us

specify which axis of the dataframe (rows or columns) to take the

average along.
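To make the two directions concrete, here is a minimal sketch on a made-up dataframe. The name toy and its values are hypothetical and are not part of the GDP dataset; only the axis values 'rows' and 'columns' come from this episode.

```python
import pandas as pd

# Hypothetical toy data: two countries (rows) and three years (columns).
toy = pd.DataFrame(
    {"1952": [1.0, 3.0], "1957": [2.0, 5.0], "1962": [3.0, 7.0]},
    index=["A", "B"],
)

# axis='rows' collapses the rows: one mean per column (per year).
mean_per_year = toy.mean(axis="rows")

# axis='columns' collapses the columns: one mean per row (per country).
mean_per_country = toy.mean(axis="columns")

print(mean_per_year)
print(mean_per_country)
```

The result of each call is indexed by whatever labels were not averaged away: years in the first case, countries in the second.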

Using the axis keyword, we can retrieve the average GDP

for each country by taking the average value across the columns

(by setting the argument axis='columns' to the

mean method):

OUTPUT

country

Albania 3255.366633

Austria 20411.916279

Belgium 19900.758072

... ...

Switzerland 27074.334405

Turkey 4469.453380

United Kingdom 19380.472986

dtype: float64

Plotting statistics

The plot method called directly from our dataframe is

implicitly using matplotlib’s pyplot.plot

function. Whilst calling plot directly from a dataset can

be helpful to get a quick visual glimpse of the data, most of the time

we will want to manipulate our data in some way and plot some

significant statistics or derived values, rather than the raw data

itself. We will use the matplotlib library to manage and

create plots ourselves from here on. As with any library, we must first

tell Python to import it, here as import matplotlib.pyplot as plt:

We can now create a plot of the average GDP of European countries in the following way:

PYTHON

fig = plt.figure()

mean_gdp_each_year = data_eu.mean(axis='rows')

plt.plot(mean_gdp_each_year)

plt.show()

Let’s break down what each line is doing.

First, we use the figure function from the

matplotlib.pyplot library to create a new, blank figure

canvas. The variable fig can be used to access this figure

canvas.

Then, we create a new variable mean_gdp_each_year, with

the average value of our data_eu dataframe across the rows

(down the columns).

Next, using the plot function from the

matplotlib.pyplot library, we request to visualise the data

stored in mean_gdp_each_year into the figure canvas. If we

had multiple figures open, we could specify which one to plot this data

on. But since we only have one (fig), plt.plot

knows to plot the data onto this one. Finally, the plt.show()

function displays the final result on the screen.

Grouping Plots

So far, matplotlib’s plot hasn’t done much more than

dataframe’s plot function did, but that changes now. We will

often want to display multiple statistics

side-by-side, or the same statistic from multiple datasets

simultaneously for comparison purposes. This can be achieved by adding

subplots to a figure, using the add_subplot

method. Let’s demonstrate how to do this by plotting the maximum and

minimum GDP of countries in Europe for each year alongside the average

GDP for that year.

To achieve this we will need to:

1. Compute the min, max, and average GDP each year for European countries;

2. Create a new figure with the right canvas proportions;

3. Generate different subplots (“axes”) in which to plot the data; and

4. Display the data on the screen.

PYTHON

eu_min_data = data_eu.min(axis='rows')

eu_max_data = data_eu.max(axis='rows')

eu_avg_data = data_eu.mean(axis='rows')

fig = plt.figure(figsize=(10., 3.))

axes_1 = fig.add_subplot(1, 3, 1)

axes_1.plot(eu_min_data)

axes_2 = fig.add_subplot(1, 3, 2)

axes_2.plot(eu_max_data)

axes_3 = fig.add_subplot(1, 3, 3)

axes_3.plot(eu_avg_data)

plt.show()

Note how we’ve set the right axis arguments when computing the

different statistical properties (axis='rows'). The

parameter figsize tells Python how big to make the figure,

as a (width, height) pair in inches. In this case the width is a bit larger than three

times the height. Each subplot is placed into the figure using the figure’s

add_subplot method. The

add_subplot method takes 3 parameters. The first denotes

how many total rows of subplots there are, the second parameter refers

to the total number of subplot columns, and the final parameter denotes

which subplot your variable is referencing (left-to-right,

top-to-bottom). Each subplot is stored in a different variable

(axes_1, axes_2, axes_3). Once a

subplot is created, the axes can be used to place the desired plot for

each.
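The numbering of subplot positions can be sketched as follows. This is an illustration only: the 2 x 3 grid, the variable names and the plotted values are hypothetical, and the Agg backend is selected purely so the sketch runs without opening a window.

```python
import matplotlib
matplotlib.use("Agg")  # assumption: non-interactive backend, no display needed
import matplotlib.pyplot as plt

fig = plt.figure(figsize=(10.0, 3.0))

# A 2 x 3 grid has six slots, numbered 1 to 6 left-to-right, then top-to-bottom.
top_left = fig.add_subplot(2, 3, 1)
top_right = fig.add_subplot(2, 3, 3)
bottom_middle = fig.add_subplot(2, 3, 5)

top_left.plot([1, 2, 3])        # hypothetical values, just to draw something
bottom_middle.plot([3, 2, 1])

number_of_axes = len(fig.axes)
print(number_of_axes)
```

Only the slots that are requested get created, so this figure holds three subplots even though the grid has room for six.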

min and max methods

The min and max functions can be used on a

dataframe in the same way as the mean function, and take

the same axis parameter. For us, this retrieves the minimum

GDP of countries in Europe for each year:

OUTPUT

1952 973.533195

1957 1353.989176

1962 1709.683679

1967 2172.352423

1972 2860.169750

1977 3528.481305

1982 3630.880722

1987 3738.932735

1992 2497.437901

1997 3193.054604

2002 4604.211737

2007 5937.029526

dtype: float64

Adding labels

Just because we have plotted some statistics doesn’t mean our plot is complete!

- There are no axis labels telling us what each subplot is showing us.

- There’s no title for the plot.

- There’s a lot of whitespace (empty space) surrounding our plot, and between our subplots.

We can fix these using some more matplotlib functions.

- The set_ylabel method lets us add a label for the y-axis of any plot or subplot, using dot notation.

- The set_title method lets us add a title to a subplot.

- The suptitle method lets us add a title to the figure window (“super”-title).

- The tight_layout method tells matplotlib to remove as much whitespace as possible from our figure.

Putting it all together:

PYTHON

eu_min_data = data_eu.min(axis='rows')

eu_max_data = data_eu.max(axis='rows')

eu_avg_data = data_eu.mean(axis='rows')

fig = plt.figure(figsize=(10., 3.))

axes_1 = fig.add_subplot(1, 3, 1)

axes_1.plot(eu_min_data)

axes_1.set_ylabel('GDP/capita')

axes_1.set_title('Min')

axes_2 = fig.add_subplot(1, 3, 2)

axes_2.plot(eu_max_data)

axes_2.set_ylabel('GDP/capita')

axes_2.set_title('Max')

axes_3 = fig.add_subplot(1, 3, 3)

axes_3.plot(eu_avg_data)

axes_3.set_ylabel('GDP/capita')

axes_3.set_title('Average')

fig.suptitle('GDP/capita statistics for European countries')

fig.tight_layout()

plt.show()

Setting limits for the axes

You might have noticed that our subplots leave a little bit of space between our line and the edges of the subplot itself, which is a result of the range of the y-axis being slightly bigger than the maximum and minimum range of the data we are plotting.

Can you figure out a way to manually set the range of the y-axis, to remove this white space?

Hint:

- Try using the set_ylim(min_value, max_value) method on the subplots.

- Try using the max() and min() methods on the eu_min_data and eu_max_data variables.

To fix this for the first subplot, we can set the y-axis limits to the overall minimum and maximum values of the dataframe.

PYTHON

axes_1 = fig.add_subplot(1, 3, 1)

axes_1.plot(eu_min_data)

axes_1.set_ylabel('GDP/capita')

axes_1.set_title('Min')

# Sets the y-limits to the min/max overall values to ease comparison across the plots

y_axes_min_value = eu_min_data.min()

y_axes_max_value = eu_max_data.max()

axes_1.set_ylim(y_axes_min_value, y_axes_max_value)

Or, without creating intermediate variables, you could use axes_1.set_ylim(eu_min_data.min(), eu_max_data.max()) instead of the last three lines!

Drawstyles

The plot method doesn’t just draw straight, blue lines -

it can be customised with some optional parameters.

Modify your calls to plot with different parameters to

create different line styles in each of the three subplots. Some useful

parameters to add to plot are:

- linestyle = ':'. Can also be tried with '--', '-.', and a few other options.

- color = 'red'. Several other colours are also available!

- marker = 'x'. There are lots of different plotting markers to try out.

Make Your Own Plot

Create a plot showing the standard deviation of the GDP/capita for each year.

Hint: Try using the std method on data_eu.

Moving Plots Around

Modify the program to display the three plots on top of one another instead of side by side.

PYTHON

eu_min_data = data_eu.min(axis='rows')

eu_max_data = data_eu.max(axis='rows')

eu_avg_data = data_eu.mean(axis='rows')

fig = plt.figure(figsize=(10., 3.))

axes_1 = fig.add_subplot(3, 1, 1)

axes_1.plot(eu_min_data)

axes_1.set_ylabel('GDP/capita')

axes_1.set_title('Min')

axes_2 = fig.add_subplot(3, 1, 2)

axes_2.plot(eu_max_data)

axes_2.set_ylabel('GDP/capita')

axes_2.set_title('Max')

axes_3 = fig.add_subplot(3, 1, 3)

axes_3.plot(eu_avg_data)

axes_3.set_ylabel('GDP/capita')

axes_3.set_title('Average')

fig.suptitle('GDP/capita statistics for European countries')

fig.tight_layout()

plt.show()

Moving Plots Around (continued)

What would you change to make each of these plots a similar width to when they were side by side?

Change In GDP

The GDP data is longitudinal in the sense that each row represents a series of observations relating to one country. This means that the change in GDP over time is a meaningful concept. Let’s find out how to calculate changes in the data contained in an array with pandas.

The DataFrame.diff() function takes an array and returns

the differences between two successive values, depending on the axis

requested.

Let’s use it to examine the changes between successive recorded years for Portugal.

OUTPUT

1952 3068.319867

1957 3774.571743

1962 4727.954889

... ...

1997 17641.031560

2002 19970.907870

2007 20509.647770

Name: Portugal, dtype: float64

Calling portugal.diff() would do the following

calculations

PYTHON

[ 3068.31 - NaN, 3774.57 - 3068.31, 4727.95 - 3774.57, ..., 19970.90 - 17641.03, 20509.64 - 19970.90 ]

and return the 12 difference values in a new series.

OUTPUT

1952 NaN

1957 706.251876

1962 953.383146

... ...

1997 1433.764930

2002 2329.876310

2007 538.739900

Name: Portugal, dtype: float64

Note that the first value is NaN because there is no earlier value to subtract from the first element.

When calling DataFrame.diff with a 2-dimensional

dataframe, an axis argument may be passed to the function

to specify which axis to process. When applying

DataFrame.diff to our 2D GDP dataframe, which axis would we

specify to obtain differences between successive years for the same country?
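One way to check an answer is to try diff on a tiny made-up table. The sketch below assumes the same layout as the GDP data (countries as rows, years as columns); the country name Toyland and all values are hypothetical.

```python
import pandas as pd

# Hypothetical values for one country, indexed by year.
gdp = pd.Series([100.0, 130.0, 120.0], index=["1952", "1957", "1962"])

changes = gdp.diff()   # first entry is NaN: nothing precedes it
print(changes)

# Same numbers as a one-row dataframe: countries as rows, years as columns.
table = pd.DataFrame([[100.0, 130.0, 120.0]], index=["Toyland"], columns=gdp.index)

# axis='columns' takes differences along each row, i.e. within one country.
row_changes = table.diff(axis="columns")
print(row_changes)
```

With axis='rows' the same call would instead subtract one country's values from the next country's, which is rarely meaningful for this kind of data.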

Change In GDP (continued)

How would you find the largest change in GDP for each country? Does it matter if the change in GDP is an increase or a decrease?

By using the DataFrame.max() function after you apply

the DataFrame.diff() function, you will get the largest

difference between successive observations.

OUTPUT

country

Albania 1411.157133

Austria 3827.023200

Belgium 3523.102370

... ...

Switzerland 4228.968720

Turkey 1950.190666

United Kingdom 3724.262090

dtype: float64

If GDP values decrease along an axis, then the difference

from one element to the next will be negative. If you are interested in

the magnitude of the change and not the direction, the

DataFrame.abs() function will provide that.

Notice the difference if you get the largest absolute difference between readings.

OUTPUT

country

Albania 1411.157133

Austria 3827.023200

Belgium 3523.102370

... ...

Switzerland 4228.968720

Turkey 1950.190666

United Kingdom 3724.262090

dtype: float64

Key Points

- Use the pyplot module from the matplotlib library to create visualizations of data.

- Dataframes have methods like min, max, and mean to compute statistics along either the rows or the columns.

- Use the axis argument in statistic functions to calculate the values across the specified axis.

- We can use add_subplot to create multiple plots in a single figure.

- We can customise the labels, axis ranges, line styles, and more of our plots using matplotlib.

Content from Storing Multiple Values in Lists

Last updated on 2024-02-23

Estimated time: 45 minutes

Overview

Questions

- How can I store many values together?

Objectives

- Explain what a list is.

- Create and index lists of simple values.

- Change the values of individual elements

- Append values to an existing list

- Reorder and slice list elements

- Create and manipulate nested lists

In the previous episode, we analysed a single .csv data

file containing GDP data for countries in Europe. However, we also have

similar data for the other continents, and we would like to repeat our

analysis on each of them. This means that we still have 4 more data

files to process!

The natural first step is to collect the names of all the files that we have to process. In Python, a list is a way to store multiple values together. In this episode, we will learn how to store multiple values in a list as well as how to work with lists.

Python lists

Unlike dataframes, lists are built into the language so we do not have to load a library to use them. We create a list by putting values inside square brackets and separating the values with commas:
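A minimal sketch of such a statement follows. The name odds and its four values are taken from the examples used throughout this episode.

```python
# Square brackets create the list; commas separate the values.
odds = [1, 3, 5, 7]
print('odds are:', odds)
```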

OUTPUT

odds are: [1, 3, 5, 7]

We can access elements of a list using indices, the numbered positions of elements in the list. These positions are numbered starting at 0, so the first element has an index of 0.

PYTHON

print('first element:', odds[0])

print('last element:', odds[3])

print('"-1" element:', odds[-1])

OUTPUT

first element: 1

last element: 7

"-1" element: 7

Yes, we can use negative numbers as indices in Python. When we do so,

the index -1 gives us the last element in the list,

-2 the second to last, and so on. Because of this,

odds[3] and odds[-1] point to the same element

here.

There is one important difference between lists and strings: we can change the values in a list, but we cannot change individual characters in a string. For example:

PYTHON

names = ['Curie', 'Darwing', 'Turing'] # typo in Darwin's name

print('names is originally:', names)

names[1] = 'Darwin' # correct the name

print('final value of names:', names)

OUTPUT

names is originally: ['Curie', 'Darwing', 'Turing']

final value of names: ['Curie', 'Darwin', 'Turing']

works, but:

ERROR

---------------------------------------------------------------------------

TypeError Traceback (most recent call last)

<ipython-input-8-220df48aeb2e> in <module>()

1 name = 'Darwin'

----> 2 name[0] = 'd'

TypeError: 'str' object does not support item assignment

does not.

Ch-Ch-Ch-Ch-Changes

Data which can be modified in place is called mutable, while data which cannot be modified is called immutable. Strings and numbers are immutable. This does not mean that variables with string or number values are constants, but when we want to change the value of a string or number variable, we can only replace the old value with a completely new value.

Lists on the other hand, are mutable: we can modify them after they have been created. We can change individual elements, append new elements, or reorder the whole list. For some operations, like sorting, we can choose whether to use a function that modifies the data in-place or a function that returns a modified copy and leaves the original unchanged.
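The sorting choice mentioned above can be sketched with the built-in sorted function and the list method sort. The list scores and its values are hypothetical.

```python
scores = [3, 1, 2]              # hypothetical values

ordered_copy = sorted(scores)   # returns a new, sorted list
print(scores)                   # the original order is unchanged here
print(ordered_copy)

scores.sort()                   # sorts the same list in place and returns None
print(scores)
```

After the call to sort, the original ordering is gone, which is exactly the kind of in-place change the rest of this section warns about.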

Be careful when modifying data in-place. If two variables refer to the same list, and you modify the list value, it will change for both variables!

PYTHON

mild_salsa = ['peppers', 'onions', 'cilantro', 'tomatoes']

hot_salsa = mild_salsa # <-- mild_salsa and hot_salsa point to the *same* list data in memory

hot_salsa[0] = 'hot peppers'

print('Ingredients in mild salsa:', mild_salsa)

print('Ingredients in hot salsa:', hot_salsa)

OUTPUT

Ingredients in mild salsa: ['hot peppers', 'onions', 'cilantro', 'tomatoes']

Ingredients in hot salsa: ['hot peppers', 'onions', 'cilantro', 'tomatoes']

If you want variables with mutable values to be independent, you must make a copy of the value when you assign it.

PYTHON

mild_salsa = ['peppers', 'onions', 'cilantro', 'tomatoes']

hot_salsa = list(mild_salsa) # <-- makes a *copy* of the list

hot_salsa[0] = 'hot peppers'

print('Ingredients in mild salsa:', mild_salsa)

print('Ingredients in hot salsa:', hot_salsa)

OUTPUT

Ingredients in mild salsa: ['peppers', 'onions', 'cilantro', 'tomatoes']

Ingredients in hot salsa: ['hot peppers', 'onions', 'cilantro', 'tomatoes']

Because of pitfalls like this, code which modifies data in place can be more difficult to understand. However, it is often far more efficient to modify a large data structure in place than to create a modified copy for every small change. You should consider both of these aspects when writing your code.

Nested Lists

Since a list can contain any Python variables, it can even contain other lists.

For example, you could represent the products on the shelves of a

small grocery shop as a nested list called veg:

To store the contents of the shelf in a nested list, you write it this way:

PYTHON

veg = [['lettuce', 'lettuce', 'peppers', 'zucchini'],

       ['lettuce', 'lettuce', 'peppers', 'zucchini'],

       ['lettuce', 'cilantro', 'peppers', 'zucchini']]

Here are some visual examples of how indexing a list of lists

veg works. First, you can reference each row on the shelf

as a separate list. For example, veg[2] represents the

bottom row, which is a list of the baskets in that row.

![veg is now shown as a list of three rows, with veg[0] representing the top row of three baskets, veg[1] representing the second row, and veg[2] representing the bottom row.](../fig/04_groceries_veg0.png)

Index operations using the image would work like this:
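The statements behind the two outputs that follow are not shown here. A plausible sketch, assuming the bottom row and then the top row were requested, is:

```python
veg = [['lettuce', 'lettuce', 'peppers', 'zucchini'],
       ['lettuce', 'lettuce', 'peppers', 'zucchini'],
       ['lettuce', 'cilantro', 'peppers', 'zucchini']]

bottom_row = veg[2]   # a single index selects a whole row (itself a list)
top_row = veg[0]

print(bottom_row)
print(top_row)
```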

OUTPUT

['lettuce', 'cilantro', 'peppers', 'zucchini']

OUTPUT

['lettuce', 'lettuce', 'peppers', 'zucchini']

To reference a specific basket on a specific shelf, you use two indexes. The first index represents the row (from top to bottom) and the second index represents the specific basket (from left to right).

![veg is now shown as a two-dimensional grid, with each basket labelled according to its index in the nested list. The first index is the row number and the second index is the basket number, so veg[1][3] represents the basket on the far right side of the second row (basket 4 on row 2): zucchini](../fig/04_groceries_veg00.png)
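Double indexing can be sketched as below. The particular index pairs are assumptions chosen to be consistent with the grid in the figure; other pairs would also return 'lettuce'.

```python
veg = [['lettuce', 'lettuce', 'peppers', 'zucchini'],
       ['lettuce', 'lettuce', 'peppers', 'zucchini'],
       ['lettuce', 'cilantro', 'peppers', 'zucchini']]

first_basket = veg[0][0]   # row 0 (top), basket 0 (leftmost)
third_basket = veg[1][2]   # row 1 (second), basket 2 (third from the left)
far_right = veg[1][3]      # the basket described in the figure caption

print(first_basket)
print(third_basket)
print(far_right)
```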

OUTPUT

'lettuce'

OUTPUT

'peppers'

There are many ways to change the contents of lists besides assigning new values to individual elements:
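For instance, the list method append adds a value to the end of a list. This sketch assumes odds starts out as [1, 3, 5, 7], as in the earlier examples.

```python
odds = [1, 3, 5, 7]

odds.append(11)   # modifies the list in place; nothing is returned
print('odds after adding a value:', odds)
```

The methods pop and reverse used in the following examples change the list in place in the same way.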

OUTPUT

odds after adding a value: [1, 3, 5, 7, 11]

PYTHON

removed_element = odds.pop(0)

print('odds after removing the first element:', odds)

print('removed_element:', removed_element)

OUTPUT

odds after removing the first element: [3, 5, 7, 11]

removed_element: 1

OUTPUT

odds after reversing: [11, 7, 5, 3]

While modifying in place, it is useful to remember that Python treats lists in a slightly counter-intuitive way.

As we saw earlier, when we modified an item of the mild_salsa list in place, making a list, (attempting to) copy it by plain assignment, and then modifying the copy can cause all sorts of trouble. This also applies to modifying the list using the above functions:

PYTHON

odds = [3, 5, 7]

primes = odds

primes.append(2)

print('primes:', primes)

print('odds:', odds)

OUTPUT

primes: [3, 5, 7, 2]

odds: [3, 5, 7, 2]

This is because Python stores a list in memory, and then can use

multiple names to refer to the same list. If all we want to do is copy a

(simple) list, we can again use the list function, so we do

not modify a list we did not mean to:

PYTHON

odds = [3, 5, 7]

primes = list(odds)

primes.append(2)

print('primes:', primes)

print('odds:', odds)

OUTPUT

primes: [3, 5, 7, 2]

odds: [3, 5, 7]

Subsets of lists and strings can be accessed by specifying ranges of

values in brackets, similar to how we accessed ranges of entries in a

dataframe via the .iloc function. This is commonly referred

to as “slicing” the list/string.

PYTHON

binomial_name = 'Drosophila melanogaster'

group = binomial_name[0:10]

print('group:', group)

species = binomial_name[11:23]

print('species:', species)

chromosomes = ['X', 'Y', '2', '3', '4']

autosomes = chromosomes[2:5]

print('autosomes:', autosomes)

last = chromosomes[-1]

print('last:', last)

OUTPUT

group: Drosophila

species: melanogaster

autosomes: ['2', '3', '4']

last: 4

Slicing From the End

Use slicing to access only the last four characters of a string or entries of a list.

PYTHON

string_for_slicing = 'Observation date: 02-Feb-2013'

list_for_slicing = [['fluorine', 'F'],

                    ['chlorine', 'Cl'],

                    ['bromine', 'Br'],

                    ['iodine', 'I'],

                    ['astatine', 'At']]

OUTPUT

'2013'

[['chlorine', 'Cl'], ['bromine', 'Br'], ['iodine', 'I'], ['astatine', 'At']]

Would your solution work regardless of whether you knew beforehand the length of the string or list (e.g. if you wanted to apply the solution to a set of lists of different lengths)? If not, try to change your approach to make it more robust.

Hint: Remember that indices can be negative as well as positive
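One possible solution, sketched with a negative start index so that it does not depend on the length of the sequence (the result variable names are illustrative):

```python
string_for_slicing = 'Observation date: 02-Feb-2013'
list_for_slicing = [['fluorine', 'F'],
                    ['chlorine', 'Cl'],
                    ['bromine', 'Br'],
                    ['iodine', 'I'],
                    ['astatine', 'At']]

# -4 means "four positions before the end"; omitting the stop runs to the end.
last_four_characters = string_for_slicing[-4:]
last_four_entries = list_for_slicing[-4:]

print(last_four_characters)
print(last_four_entries)
```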

Non-Continuous Slices

So far we’ve seen how to use slicing to take single blocks of successive entries from a sequence. But what if we want to take a subset of entries that aren’t next to each other in the sequence?

You can achieve this by providing a third argument to the range within the brackets, called the step size. The example below shows how you can take every third entry in a list:

PYTHON

primes = [2, 3, 5, 7, 11, 13, 17, 19, 23, 29, 31, 37]

subset = primes[0:12:3]

print('subset', subset)

OUTPUT

subset [2, 7, 17, 29]

Notice that the slice taken begins with the first entry in the range, followed by entries taken at equally-spaced intervals (the steps) thereafter. If you wanted to begin the subset with the third entry, you would need to specify that as the starting point of the sliced range:

PYTHON

primes = [2, 3, 5, 7, 11, 13, 17, 19, 23, 29, 31, 37]

subset = primes[2:12:3]

print('subset', subset)

OUTPUT

subset [5, 13, 23, 37]

Use the step size argument to create a new string that contains only every other character in the string “In an octopus’s garden in the shade”. Start with creating a variable to hold the string:

What slice of beatles will produce the following output

(i.e., the first character, third character, and every other character

through the end of the string)?
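One possible answer is sketched below. It assumes the variable is named beatles, as in the question, and that the apostrophe is a plain ASCII one.

```python
beatles = "In an octopus's garden in the shade"

# Start and stop are omitted, so the slice covers the whole string;
# the step of 2 keeps the characters at positions 0, 2, 4, ...
every_other = beatles[::2]
print(every_other)
```

Writing beatles[0:35:2] gives the same result, but hard-codes the length of the string.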

OUTPUT

I notpssgre ntesae

If you want to take a slice from the beginning of a sequence, you can omit the first index in the range:

PYTHON

date = 'Monday 4 January 2016'

day = date[0:6]

print('Using 0 to begin range:', day)

day = date[:6]

print('Omitting beginning index:', day)

OUTPUT

Using 0 to begin range: Monday

Omitting beginning index: Monday

And similarly, you can omit the ending index in the range to take a slice to the very end of the sequence:

PYTHON

months = ['jan', 'feb', 'mar', 'apr', 'may', 'jun', 'jul', 'aug', 'sep', 'oct', 'nov', 'dec']

sond = months[8:12]

print('With known last position:', sond)

sond = months[8:len(months)]

print('Using len() to get last entry:', sond)

sond = months[8:]

print('Omitting ending index:', sond)

OUTPUT

With known last position: ['sep', 'oct', 'nov', 'dec']

Using len() to get last entry: ['sep', 'oct', 'nov', 'dec']

Omitting ending index: ['sep', 'oct', 'nov', 'dec']

Overloading

+ usually means addition, but when used on strings or

lists, it means “concatenate”. Given that, what do you think the

multiplication operator * does on lists? In particular,

what will be the output of the following code?

1. [2, 4, 6, 8, 10, 2, 4, 6, 8, 10]

2. [4, 8, 12, 16, 20]

3. [[2, 4, 6, 8, 10],[2, 4, 6, 8, 10]]

4. [2, 4, 6, 8, 10, 4, 8, 12, 16, 20]

The technical term for this is operator overloading: a

single operator, like + or *, can do different

things depending on what it’s applied to.
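The behaviour can be checked with a short sketch. The starting list is an assumption inferred from the answer options; the variable names are illustrative.

```python
counts = [2, 4, 6, 8, 10]   # assumed starting list

repeats = counts * 2        # repeats the list; does not multiply its elements
print(repeats)

joined = counts + counts    # concatenation gives the same result here
print(joined)
```

So on lists, multiplying by an integer n is equivalent to concatenating the list with itself n times, which corresponds to option 1.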

Key Points

- [value1, value2, value3, ...] creates a list.

- Lists can contain any Python object, including lists (i.e., list of lists).

- Lists are indexed and sliced with square brackets (e.g., list[0] and list[2:9]), in the same way as strings and arrays.

- Lists are mutable (i.e., their values can be changed in place).

- Strings are immutable (i.e., the characters in them cannot be changed).

Content from Repeating Actions with Loops

Last updated on 2024-02-23

Estimated time: 30 minutes

Overview

Questions

- How can I do the same operations on many different values?

Objectives

- Explain what a for loop does.

- Correctly write for loops to repeat simple calculations.

- Trace changes to a loop variable as the loop runs.

- Trace changes to other variables as they are updated by a for loop.

In the episode about visualizing data, we wrote Python code that

plots values of interest from our first dataset

(gapminder_gdp_europe.csv).

We still have four more datasets to perform our analysis over, and we’ll want to create plots for all of our data sets. Preferably, we’d do this with a single statement, and to do that, we’ll have to teach the computer how to repeat things.

An example task that we might want to repeat is accessing numbers in a list, which we will do by printing each number on a line of its own.

In Python, a list is basically an ordered collection of elements, and

every element has a unique number associated with it — its index. This

means that we can access elements in a list using their indices. For

example, we can get the first number in the list odds, by

using odds[0]. One way to print each number is to use four

print statements:

OUTPUT

1

3

5

7

This is a bad approach for three reasons:

Not scalable. Imagine you need to print a list that has hundreds of elements. It might be easier to type them in manually.

Difficult to maintain. If we want to decorate each printed element with an asterisk or any other character, we would have to change four lines of code. While this might not be a problem for small lists, it would definitely be a problem for longer ones.

Fragile. If we use it with a list that has more elements than what we initially envisioned, it will only display part of the list’s elements. A shorter list, on the other hand, will cause an error because it will be trying to display elements of the list that do not exist.

OUTPUT

1

3

5

ERROR

---------------------------------------------------------------------------

IndexError Traceback (most recent call last)

<ipython-input-3-7974b6cdaf14> in <module>()

3 print(odds[1])

4 print(odds[2])

----> 5 print(odds[3])

IndexError: list index out of range

Here’s a better approach: a for loop
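A minimal sketch of such a loop, assuming odds holds the four values used so far and using the loop variable name num that the text below refers to:

```python
odds = [1, 3, 5, 7]

# The indented line runs once for each element of odds.
for num in odds:
    print(num)
```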

OUTPUT

1

3

5

7

This is shorter, certainly shorter than something that prints every number in a hundred-number list, and more robust as well:

OUTPUT

1

3

5

7

9

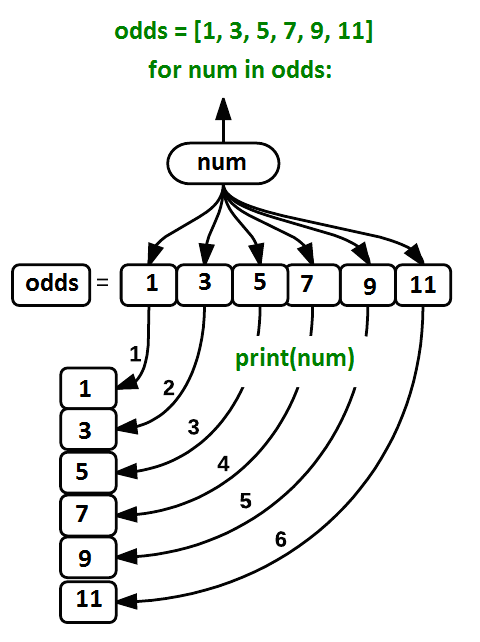

11The improved version uses a for loop to repeat an operation — in this case, printing — once for each thing in a sequence. The general form of a loop is:

Using the odds example above, the loop might look like this:

Each number (num) in the variable odds is

looped through and printed one number after another. The other numbers

in the diagram denote which loop cycle the number was printed in (1

being the first loop cycle, and 6 being the final loop cycle).

We can call the loop

variable anything we like, but there must be a colon at the end of

the line starting the loop, and we must indent anything we want to run

inside the loop. Unlike many other languages, there is no command to

signify the end of the loop body (e.g. end for); everything

indented after the for statement belongs to the loop.

What’s in a name?

In the example above, the loop variable was given the name

num as a mnemonic; it is short for ‘number’. We can choose

any name we want for variables. We might just as easily have chosen the

name banana for the loop variable, as long as we use the

same name when we invoke the variable inside the loop:

OUTPUT

1

3

5

7

9

11

It is a good idea to choose variable names that are meaningful, otherwise it would be more difficult to understand what the loop is doing.

Here’s another loop that repeatedly updates a variable:

PYTHON

length = 0

names = ['Curie', 'Darwin', 'Turing']

for value in names:

    length = length + 1

print('There are', length, 'names in the list.')

OUTPUT

There are 3 names in the list.

It’s worth tracing the execution of this little program step by step.

Since there are three names in names, the statement on line

4 will be executed three times. The first time around,

length is zero (the value assigned to it on line 1) and

value is Curie. The statement adds 1 to the

old value of length, producing 1, and updates

length to refer to that new value. The next time around,

value is Darwin and length is 1,

so length is updated to be 2. After one more update,

length is 3; since there is nothing left in

names for Python to process, the loop finishes and the

print function on line 5 tells us our final answer.

Note that a loop variable is a variable that is being used to record progress in a loop. It still exists after the loop is over, and we can re-use variables previously defined as loop variables as well:

PYTHON

name = 'Rosalind'

for name in ['Curie', 'Darwin', 'Turing']:

    print(name)

print('after the loop, name is', name)

OUTPUT

Curie

Darwin

Turing

after the loop, name is Turing

Note also that finding the length of an object is such a common

operation that Python actually has a built-in function to do it called

len:
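A minimal sketch of a call to len. The four-element list is an assumption chosen to agree with the output shown next, and the names list is reused from the loop above.

```python
print(len([0, 1, 2, 3]))

names = ['Curie', 'Darwin', 'Turing']
number_of_names = len(names)   # same answer as the counting loop above
print(number_of_names)
```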

OUTPUT

4

len is much faster than any function we could write

ourselves, and much easier to read than a two-line loop. It will also

give us the length of many other things that we haven’t met yet, so we

should always use it when we can.

From 1 to N

Python has a built-in function called range that

generates a sequence of numbers. range can accept 1, 2, or

3 parameters.

- If one parameter is given, range generates a sequence of that length, starting at zero and incrementing by 1. For example, range(3) produces the numbers 0, 1, 2.

- If two parameters are given, range starts at the first and ends just before the second, incrementing by one. For example, range(2, 5) produces 2, 3, 4.

- If range is given 3 parameters, it starts at the first one, ends just before the second one, and increments by the third one. For example, range(3, 10, 2) produces 3, 5, 7, 9.
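The three forms can be checked directly. Wrapping each range in list is only a convenience for displaying the numbers it generates; the variable names are illustrative.

```python
one_parameter = list(range(3))            # stop only
two_parameters = list(range(2, 5))        # start, stop
three_parameters = list(range(3, 10, 2))  # start, stop, step

print(one_parameter)
print(two_parameters)
print(three_parameters)
```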

Using range, write a loop that prints the first 3 natural numbers:

The body of the loop is executed 6 times.

Summing a list

Write a loop that calculates the sum of elements in a list by adding

each element and printing the final value, so

[124, 402, 36] prints 562
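One possible solution is sketched below; the names numbers, total and num are illustrative.

```python
numbers = [124, 402, 36]

total = 0                  # running sum, updated on every pass
for num in numbers:
    total = total + num

print(total)
```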

Computing the Value of a Polynomial

The built-in function enumerate takes a sequence (e.g. a

list) and generates a new sequence of the

same length. Each element of the new sequence is a pair composed of the

index (0, 1, 2,…) and the value from the original sequence:
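A minimal sketch of such a loop follows. The names a_list, idx and val match the description below, while the contents of the list are hypothetical.

```python
a_list = ['a', 'b', 'c']   # hypothetical contents

# enumerate yields (index, value) pairs, unpacked here into idx and val.
for idx, val in enumerate(a_list):
    print(idx, val)
```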

The code above loops through a_list, assigning the index

to idx and the value to val.

Suppose you have encoded a polynomial as a list of coefficients in the following way: the first element is the constant term, the second element is the coefficient of the linear term, the third is the coefficient of the quadratic term, etc.

OUTPUT

97

Write a loop using enumerate(coeffs) which computes the

value y of any polynomial, given x and

coeffs.
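One possible solution is sketched below. The values of x and coeffs are assumptions, chosen because they reproduce the value 97 shown above; the original inputs are not given here.

```python
x = 5                # assumed input value
coeffs = [2, 4, 3]   # assumed coefficients, i.e. 2 + 4*x + 3*x**2

y = 0
for idx, coef in enumerate(coeffs):
    # idx is the power of x that this coefficient multiplies
    y = y + coef * x**idx

print(y)
```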

Key Points

- Use for variable in sequence to process the elements of a sequence one at a time.

- The body of a for loop must be indented.

- Use len(thing) to determine the length of something that contains other values.

Content from Analyzing Data from Multiple Files

Last updated on 2024-02-23

Estimated time: 20 minutes

Overview

Questions

- How can I do the same operations on many different files?

Objectives

- Use a library function to get a list of filenames that match a wildcard pattern.

- Write a for loop to process multiple files.

As a final piece to processing our GDP data, we need a way to get a

list of all the files in our data directory whose names

start with gapminder_ and end with .csv. The

following library will help us to achieve this:

The glob library contains a function, also called

glob, that finds files and directories whose names match a

pattern. We provide those patterns as strings: the character

* matches zero or more characters, while ?

matches any one character. We can use this to get the names of all the

CSV files in the current directory:

OUTPUT

['gapminder_gdp_americas.csv', 'gapminder_gdp_africa.csv', 'gapminder_gdp_europe.csv',

'gapminder_gdp_asia.csv', 'gapminder_gdp_oceania.csv']

As these examples show, glob.glob’s result is a list of

file and directory paths in arbitrary order. This means we can loop over

it to do something with each filename in turn. In our case, the

“something” we want to do is generate a set of plots for each file in

our GDP dataset.

Determining Matches

Which of these files is not matched by the expression glob.glob('data/*as*.csv')?

1. data/gapminder_gdp_africa.csv

2. data/gapminder_gdp_americas.csv

3. data/gapminder_gdp_asia.csv

1 is not matched by the glob.
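The answer can be checked without any files on disk. The sketch below uses `fnmatchcase` from the standard library's `fnmatch` module, which applies the same `*` and `?` wildcard rules to plain strings; it is offered as a quick check, not as a description of how `glob.glob` works internally.

```python
from fnmatch import fnmatchcase

pattern = 'data/*as*.csv'
candidates = [
    'data/gapminder_gdp_africa.csv',
    'data/gapminder_gdp_americas.csv',
    'data/gapminder_gdp_asia.csv',
]

# keep only the names that fit the pattern
matched = [path for path in candidates if fnmatchcase(path, pattern)]
for path in candidates:
    print(path, path in matched)
```

Only the names containing the letters "as" somewhere between `data/` and `.csv` are kept, so the Africa file is left out.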

Minimum File Size

Modify this program so that it prints the number of records in the file that has the fewest records.

PYTHON

import glob
import pandas as pd

fewest = ____
for filename in glob.glob('data/*.csv'):
    dataframe = pd.____(filename)
    fewest = min(____, dataframe.shape[0])
print('smallest file has', fewest, 'records')

Note that DataFrame.shape is an attribute, not a method: it holds a tuple with the number of rows and columns of the data frame.

PYTHON

import glob
import pandas as pd

fewest = float('Inf')
for filename in glob.glob('data/*.csv'):
    dataframe = pd.read_csv(filename)
    fewest = min(fewest, dataframe.shape[0])
print('smallest file has', fewest, 'records')

You might have chosen to initialize the fewest variable with a number greater than the numbers you’re dealing with, but that could lead to trouble if you reuse the code with bigger numbers. Python lets you use positive infinity, which will work no matter how big your numbers are. What other special strings does the float function recognize?
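As a partial answer, the sketch below tries a few of the special strings that `float` accepts; the variable names are chosen for illustration only.

```python
import math

fewest = float('inf')                # positive infinity
print(fewest > 10**100)              # True: larger than any finite number
print(float('-inf') < -10**100)      # True: negative infinity
print(float('Infinity') == fewest)   # True: the longer spelling and other capitalization are accepted
not_a_number = float('nan')
print(math.isnan(not_a_number))      # True: 'nan' stands for "not a number"
```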

If we want to start by analyzing just the first three files in

alphabetical order, we can use the sorted built-in function

to generate a new sorted list from the glob.glob

output:

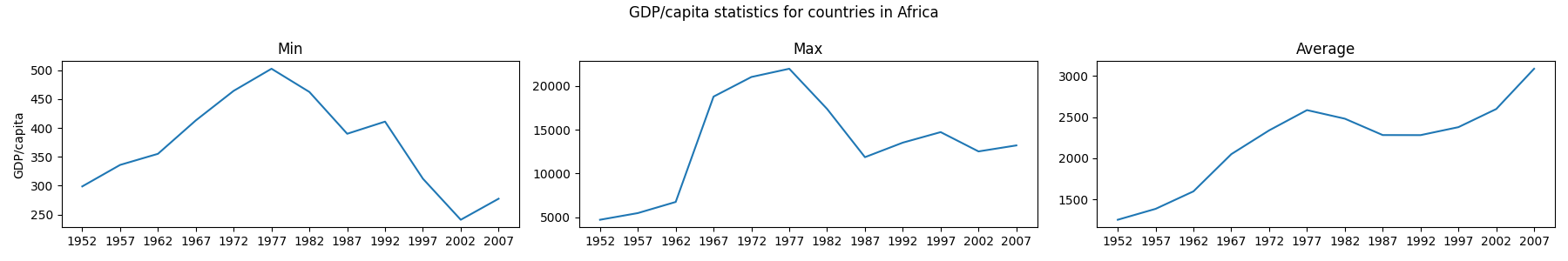

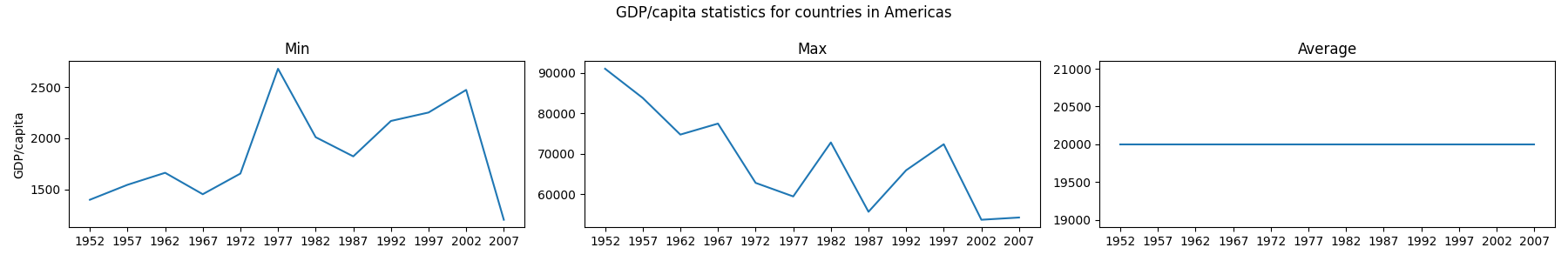

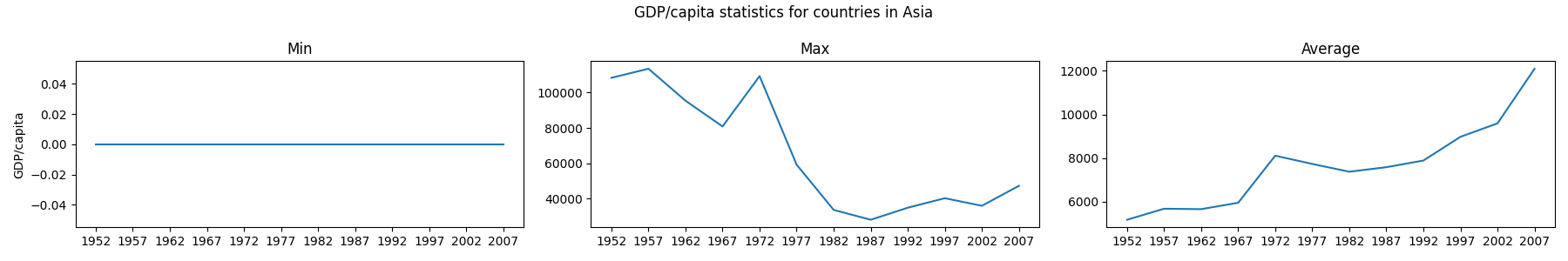

PYTHON

import glob

import pandas as pd

import matplotlib.pyplot as plt

filenames = sorted(glob.glob('data/gapminder_*.csv'))

filenames = filenames[0:3]

for filename in filenames:

print(filename)

continent = filename[14:-4].capitalize()

data_gdp = pd.read_csv(filename, index_col='country')

fig = plt.figure(figsize=(18.0, 3.0))

axes_1 = fig.add_subplot(1, 3, 1)

axes_2 = fig.add_subplot(1, 3, 2)

axes_3 = fig.add_subplot(1, 3, 3)

axes_1.set_title('Min')

axes_1.set_ylabel('GDP/capita')

axes_1.plot(data_gdp.min(axis='rows'))

axes_2.set_title('Max')

axes_2.plot(data_gdp.max(axis='rows'))

axes_3.set_title('Average')

axes_3.plot(data_gdp.mean(axis='rows'))

fig.suptitle('GDP/capita statistics for countries in ' + continent)

fig.tight_layout()

plt.show()OUTPUT

data/gapminder_gdp_africa.csv

OUTPUT

data/gapminder_gdp_americas.csv

OUTPUT

data/gapminder_gdp_asia.csv

The average plot generated for the Americas dataset looks a bit strange. How is it possible that the average value is flat across the years? We find similar behaviour in the minimum graph for the Asia dataset, which in this case is always 0.

Inspecting the data shows that some entries in the Asia dataset have a value of 0, which may suggest issues with data collection. Visually inspecting the Americas dataset, however, reveals no clear problem; nevertheless, it seems very improbable that the average remained constant over the whole period.

Comparing Data

Write a program that reads in the regional data sets and plots the average GDP per capita for each region over time in a single chart.

This solution builds a useful legend by using the string split method to extract the region from the path ‘data/gapminder_gdp_a_specific_region.csv’.

PYTHON

import glob
import pandas as pd
import matplotlib.pyplot as plt

fig, ax = plt.subplots(1, 1)
for filename in glob.glob('data/gapminder_gdp*.csv'):
    # use the country names as the index so that mean() only sees the numeric yearly columns
    dataframe = pd.read_csv(filename, index_col='country')
    # extract <region> from the filename, expected to be in the format 'data/gapminder_gdp_<region>.csv'.
    # we will split the string using the split method and `_` as our separator,
    # retrieve the last string in the list that split returns (`<region>.csv`),
    # and then remove the `.csv` extension from that string.
    region = filename.split('_')[-1][:-4]
    dataframe.mean().plot(ax=ax, label=region)
plt.legend()
plt.show()

After spending some time investigating the different statistical plots, we gain some insight into the various datasets.

The datasets appear to fall into two categories:

- seemingly “normal” datasets that nonetheless display suspicious average values (such as Americas)

- “bad” datasets that show 0 for the minima across the years (maybe due to missing data?), for different countries each year.

Key Points

- Use glob.glob(pattern) to create a list of files whose names match a pattern.

- Use * in a pattern to match zero or more characters, and ? to match any single character.

Content from Making Choices

Last updated on 2024-02-23

Estimated time: 30 minutes

Overview

Questions

- How can my programs do different things based on data values?

Objectives

- Write conditional statements including if, elif, and else branches.

- Correctly evaluate expressions containing and and or.

In our last lesson, we discovered something suspicious was going on in our GDP data by drawing some plots. How can we use Python to automatically recognize the different features we saw, and take a different action for each? In this lesson, we’ll learn how to write code that runs only when certain conditions are true.

Conditionals

We can ask Python to take different actions, depending on a

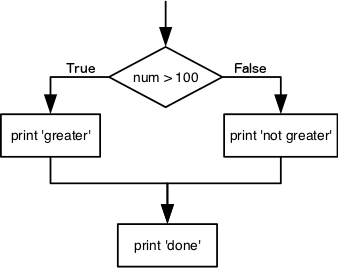

condition, with an if statement:
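The listing for this example is not reproduced here. A minimal sketch consistent with the output that follows, with `num = 37` as an assumed value:

```python
num = 37  # assumed value; any number not above 100 gives the same output
if num > 100:
    print('greater')
else:
    print('not greater')
print('done')
```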

OUTPUT

not greater
done

The second line of this code uses the keyword if to tell Python that we want to make a choice. If the test that follows the if statement is true, the body of the if (i.e., the set of lines indented underneath it) is executed, and “greater” is printed. If the test is false, the body of the else is executed instead, and “not greater” is printed. Only one or the other is ever executed before continuing on with program execution to print “done”.

Conditional statements don’t have to include an else. If

there isn’t one, Python simply does nothing if the test is false:

PYTHON

num = 53

print('before conditional...')

if num > 100:

print(num, 'is greater than 100')

print('...after conditional')OUTPUT

before conditional...

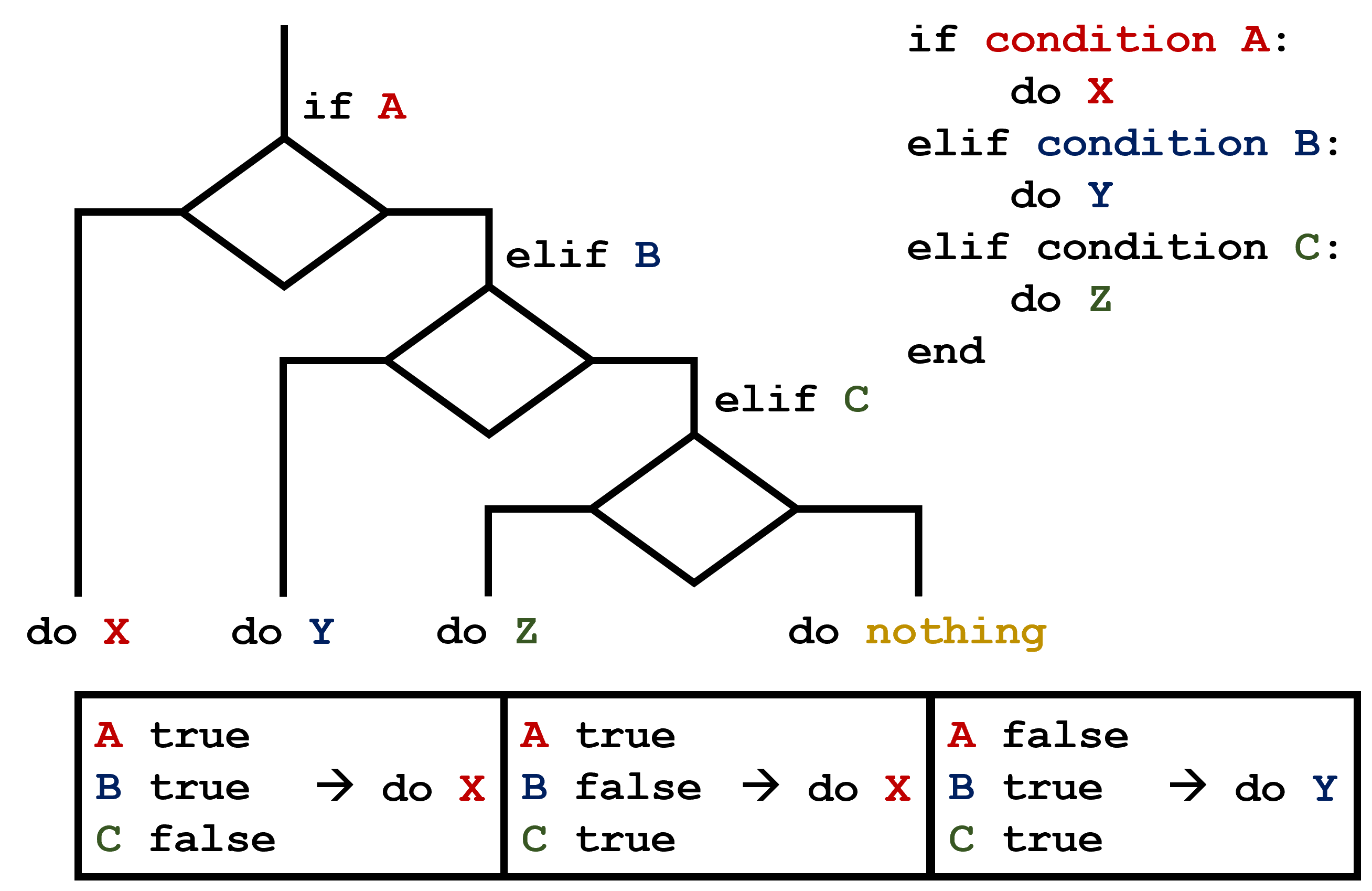

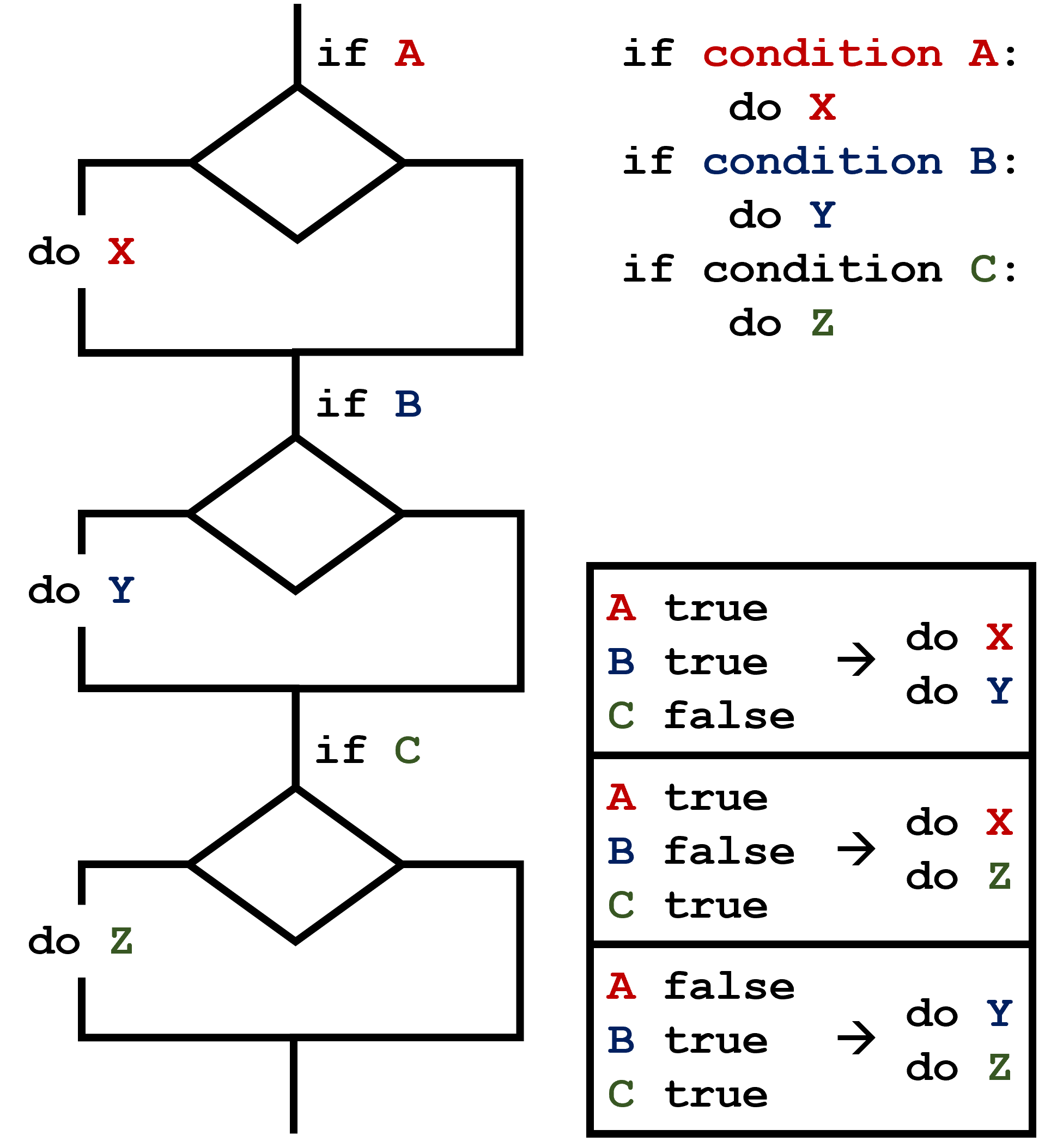

...after conditionalWe can also chain several tests together using elif,

which is short for “else if”. The following Python code uses

elif to print the sign of a number.

PYTHON

num = -3

if num > 0:
    print(num, 'is positive')
elif num == 0:
    print(num, 'is zero')
else:
    print(num, 'is negative')

OUTPUT

-3 is negative

Note that to test for equality we use a double equals sign == rather than a single equals sign = which is used to assign values.

Comparing in Python

Along with the > and == operators we

have already used for comparing values in our conditionals, there are a

few more options to know about:

- >: greater than

- <: less than

- ==: equal to

- !=: does not equal

- >=: greater than or equal to

- <=: less than or equal to
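To see a few of these operators in action, here is a small sketch; `gdp_b` is a hypothetical second value introduced only for comparison.

```python
gdp_a = 46510      # the value used earlier in the lesson
gdp_b = 46510.25   # hypothetical second value

print(gdp_a != gdp_b)    # True
print(gdp_a >= gdp_b)    # False
print(gdp_a <= gdp_b)    # True
print(gdp_a == 46510.0)  # True: an integer and a float with the same value compare equal
```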

We can also combine tests using and and or.

and is only true if both parts are true:

PYTHON

if (1 > 0) and (-1 >= 0):
    print('both parts are true')
else:
    print('at least one part is false')

OUTPUT

at least one part is false

while or is true if at least one part is true:
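The listing for the or case is not reproduced here. A minimal sketch consistent with the output that follows; the two tests are assumed for illustration:

```python
# the first test is false, the second is true, so the combined test is true
if (1 < 0) or (1 >= 0):
    print('at least one test is true')
```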

OUTPUT

at least one test is true

True and False

True and False are special words in Python called booleans, which represent truth values. An expression such as 1 < 0 evaluates to False, while -1 < 0 evaluates to True.
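A short sketch of this: the result of a comparison can be printed directly or stored in a variable like any other value. The name `is_negative` is chosen for illustration only.

```python
print(1 < 0)    # False
print(-1 < 0)   # True

# a comparison result is an ordinary value of type bool
is_negative = -1 < 0
print(type(is_negative))   # <class 'bool'>
```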

Checking our Data

Now that we’ve seen how conditionals work, we can use them to check for the suspicious features we saw in our GDP data. We are about to use functions provided by the pandas module again. Therefore, if you’re working in a new Python session, make sure to load the module with import pandas as pd.

From the first set of plots, we saw that the minimum and average exhibit strange behavior for some of our datasets. Wouldn’t it be a good idea to detect such behavior and report it as suspicious? Let’s do that! However, instead of checking every entry manually, let’s check whether the yearly minimum never changes, that is, whether the smallest and the largest of the per-year minima are the same.

PYTHON